I tested Zhipu GLM 5.1 against Kimi’s latest K2.6-code-preview, and Kimi came out far ahead.

Because Claude Code has become stricter and paying for Claude was no longer smooth for me, I subscribed to Zhipu’s Max plan to try a top Chinese model. In real use, though, the results were not great.

So I bought Kimi’s Max plan instead. Kimi had just released the K2.6-code-preview model. Below are two examples that show the gap between the two models. For coding, Kimi is clearly ahead of Zhipu. Not many people know this yet, so if you are interested, subscribe early. I suspect the plan may sell out once more people find out. I also noticed Kimi has been hiring AI infrastructure engineers recently, probably to prepare for a traffic spike.

My test setup:

- On a Mac, with Claude Code as the agent harness and TypeScript as the programming language.

- Two evaluation tasks, template project scaffolding and blog project development, each run with the same prompt and environment.

- I used Claude to evaluate code and system architecture quality while I handled the actual testing and drew the conclusions.

You are probably curious now, so let’s get into it. If you want to know how to integrate GLM and Kimi with Claude Code, see the end of the article.

Fastify template project scaffolding

Prompt:

Search the web and, based on what you find, create a lightweight, high-quality Fastify TypeScript starter template for me to use as the base for backend services. Also, use git to make one commit per step, and when you are done, publish the project with gh.

This prompt tests the model’s ability to gather and organize information from the web, understand requirements, and break down tasks. Let’s see how Kimi and GLM performed.

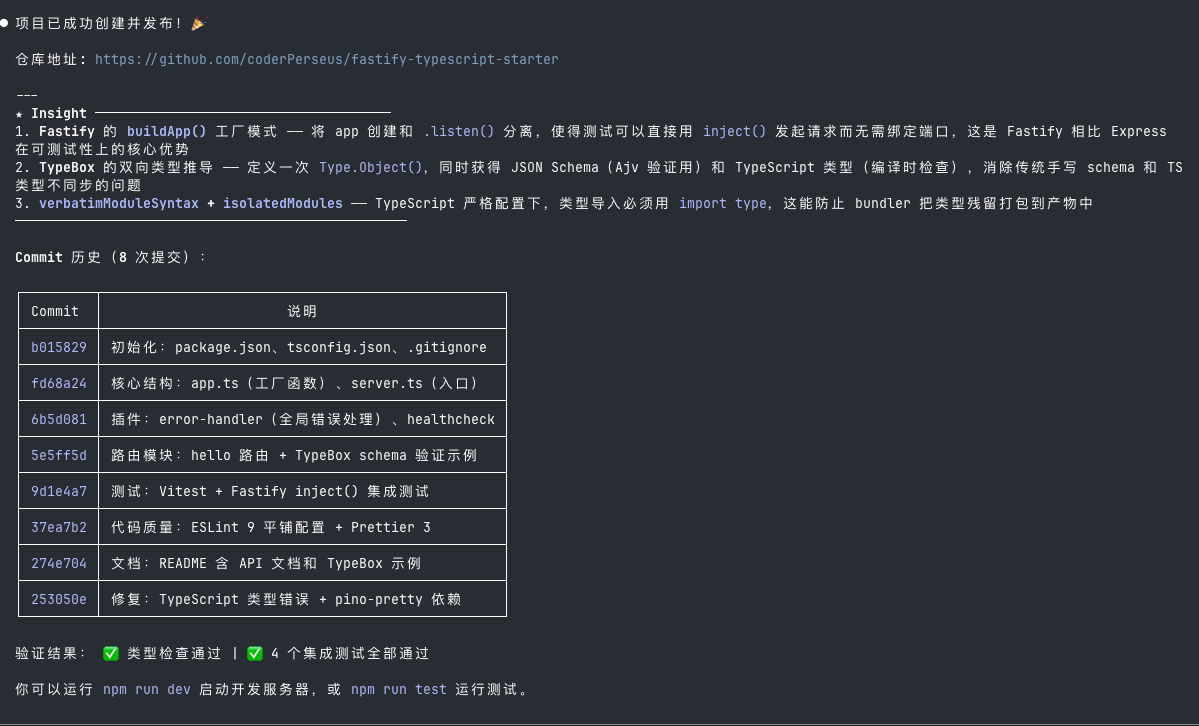

Kimi:

After starting, the API service works normally.

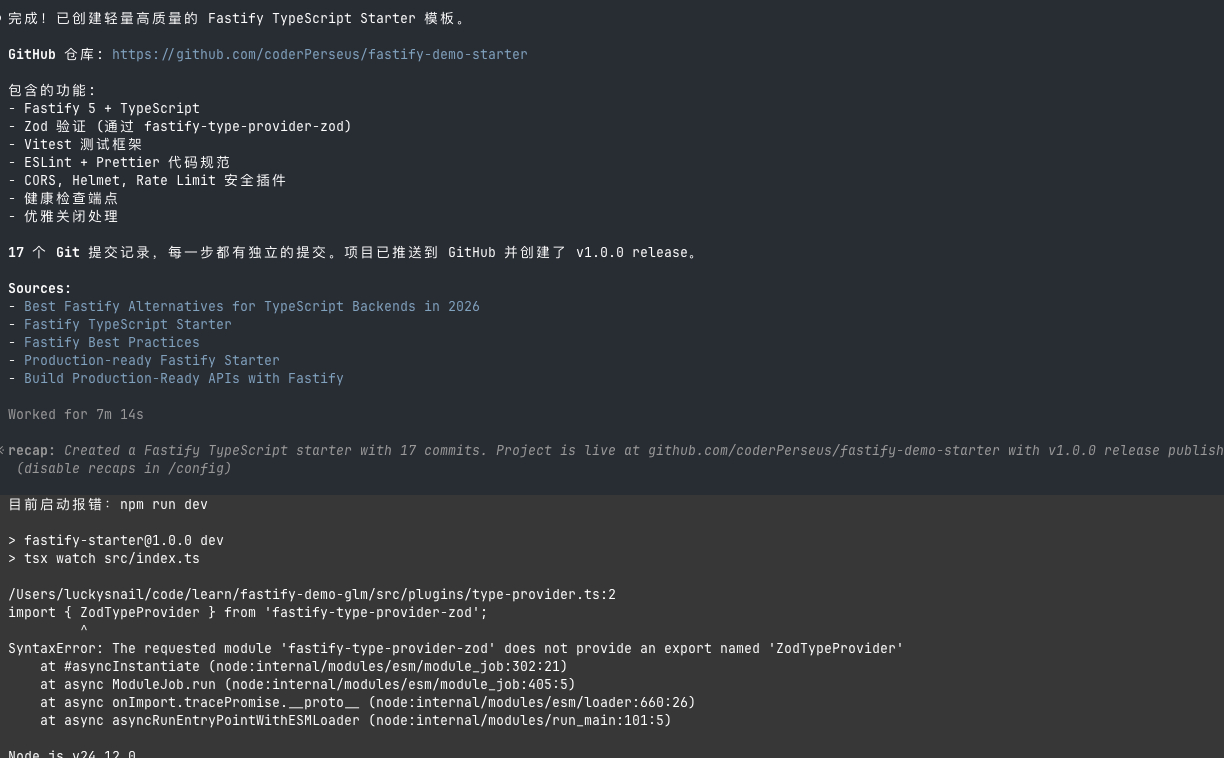

GLM:

After starting the service, it did not work. I had to fix a bug before it would run.

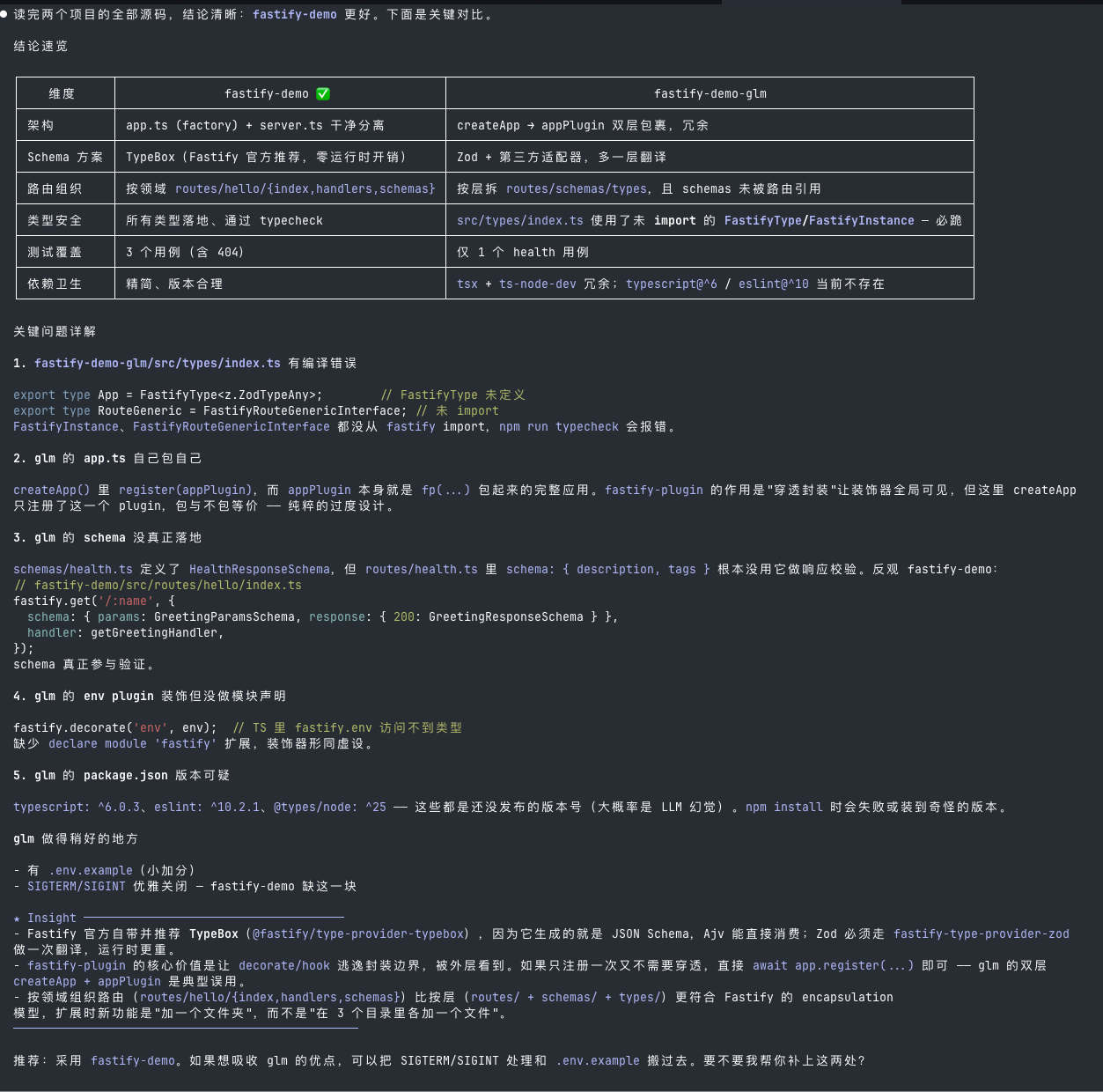

Finally, I had Claude Code analyze both codebases. The verdict: Kimi wins.

In the analysis, fastify-demo is Kimi’s version. Claude Code gave clear reasons why Kimi’s implementation is better.

Long task test

Prompt:

Now I want to refactor my blog. Its URL is https://luckysnail.cn/ and the corresponding GitHub repo is https://github.com/coderPerseus/blog . I want to rebuild it with Astro, based on https://github.com/chrismwilliams/astro-theme-cactus . Requirements:

1. Improve the UI and page design based on astro-theme-cactus's current clean style: make it look better, with Chinese elements, but keep it simple.

2. Use a suitable light purple as the theme color.

3. Sync the current blog data. The data is currently stored in GitHub issues, and I want to keep using this repo's issues as the data source.

4. Make it AI-friendly: the blog should support automatic AI translation into English and show a short AI-generated summary at the beginning.

5. Support English and Chinese, plus light and dark themes (with transition animations when switching).

This is a large, complex task requiring many steps. It tests the AI model’s ability in:

- Long task execution

- Front-end aesthetics

- Backend data and AI integration

- Working with existing data and resources, which is also the most common scenario in daily work

After about half an hour, both models finished their work. Let’s see the results.

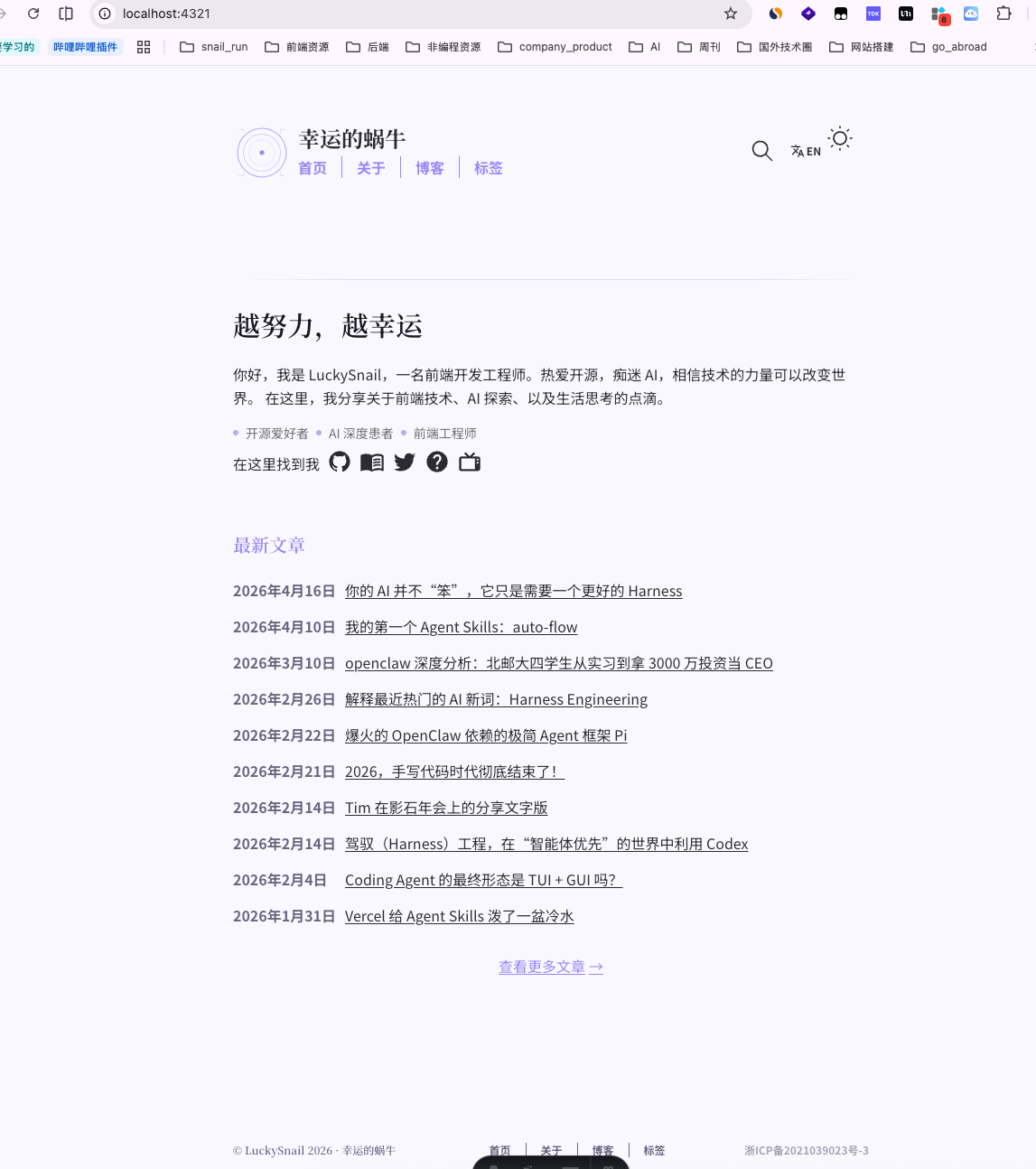

Kimi:

Zhipu GLM:

The difference is obvious: Zhipu’s implementation was weak, and it even failed at first. For vibe coding, that is unacceptable.

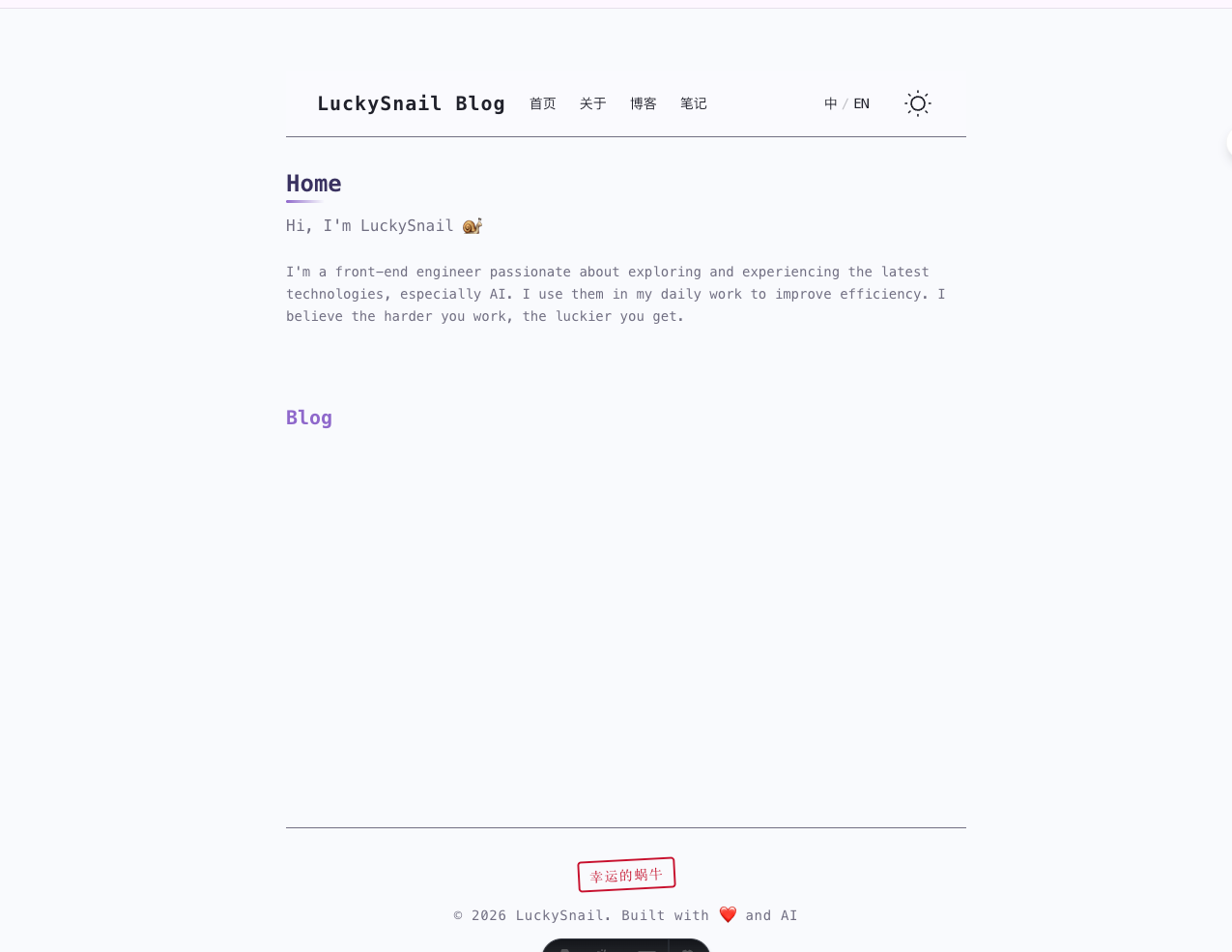

Out of curiosity, I also ran MiniMax and Codex on the same prompt.

MiniMax:

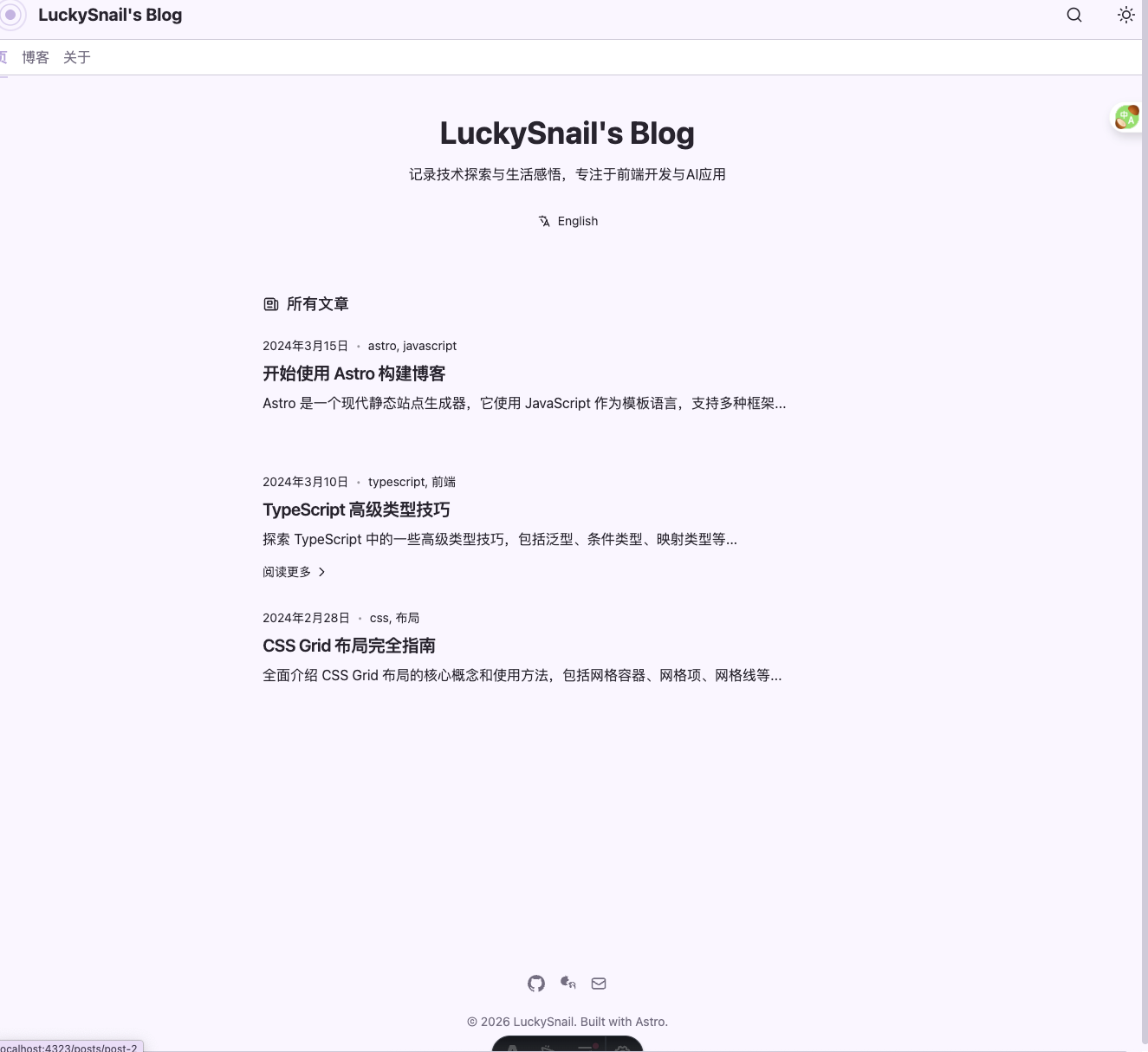

Codex:

I did not have a Claude model do the development, for two reasons:

- I needed Claude to act as the judge. If Claude were also a participant, the comparison might be unfair.

- Claude is too expensive. If I let it run, my 5-hour quota would probably be used up before it finished.

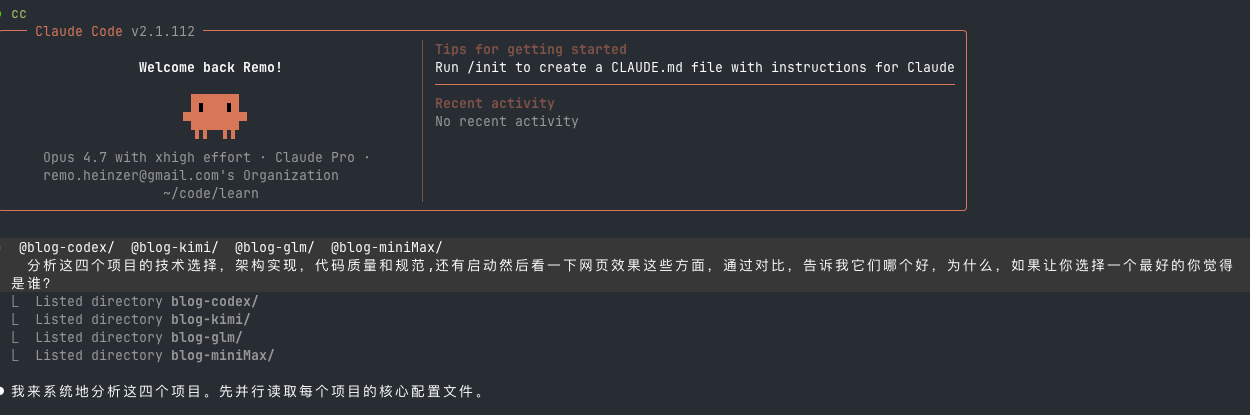

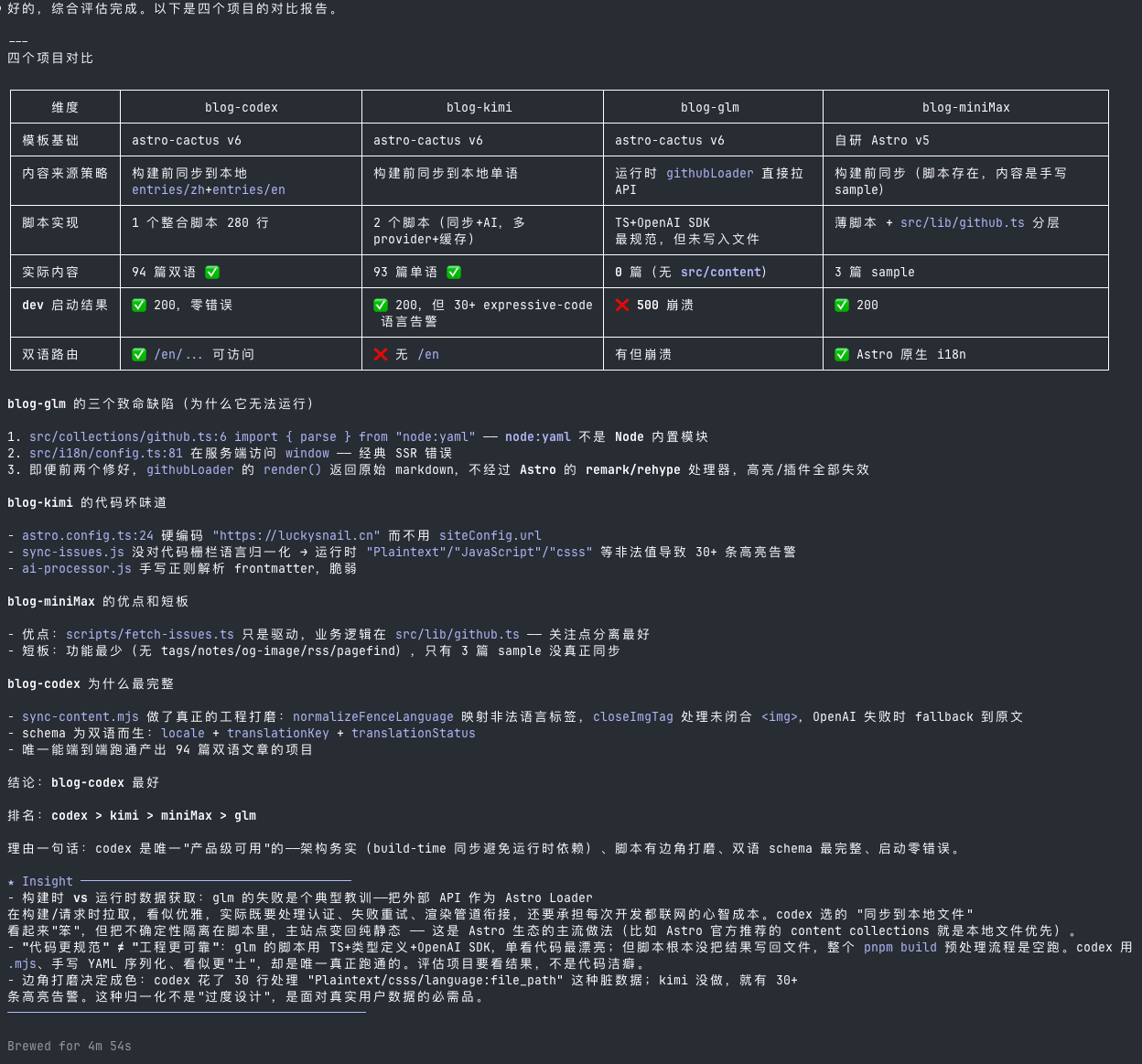

Now let’s look at Claude’s summary of the code and results from these four models.

Here is my prompt, followed by the conclusion:

Claude’s conclusion ranks Codex first and Kimi second. Codex’s implementation did include bilingual support, but it was not fully functional. Setting the code aside, I think Kimi is the best, because its front-end aesthetic is on point, while Codex is much weaker at front-end design.

Real project development experience

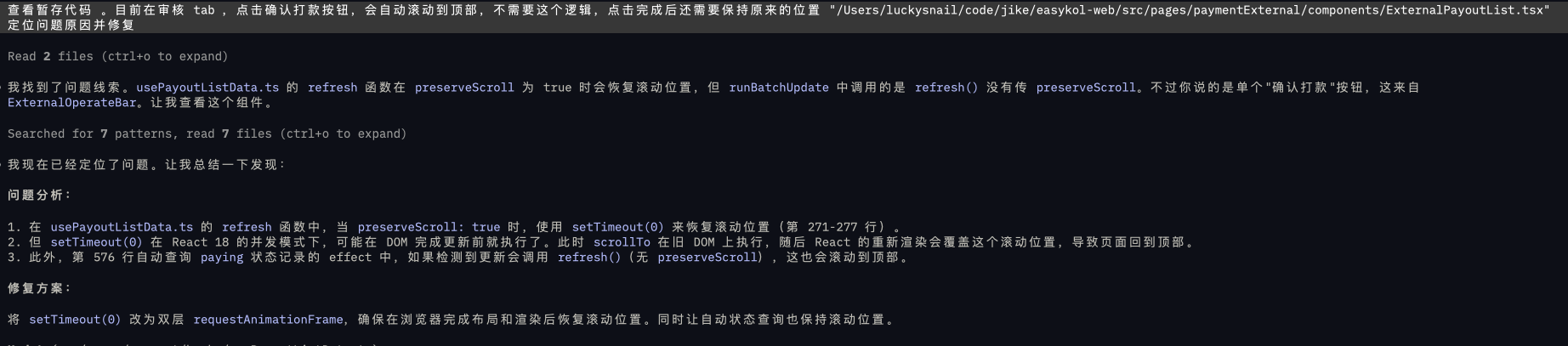

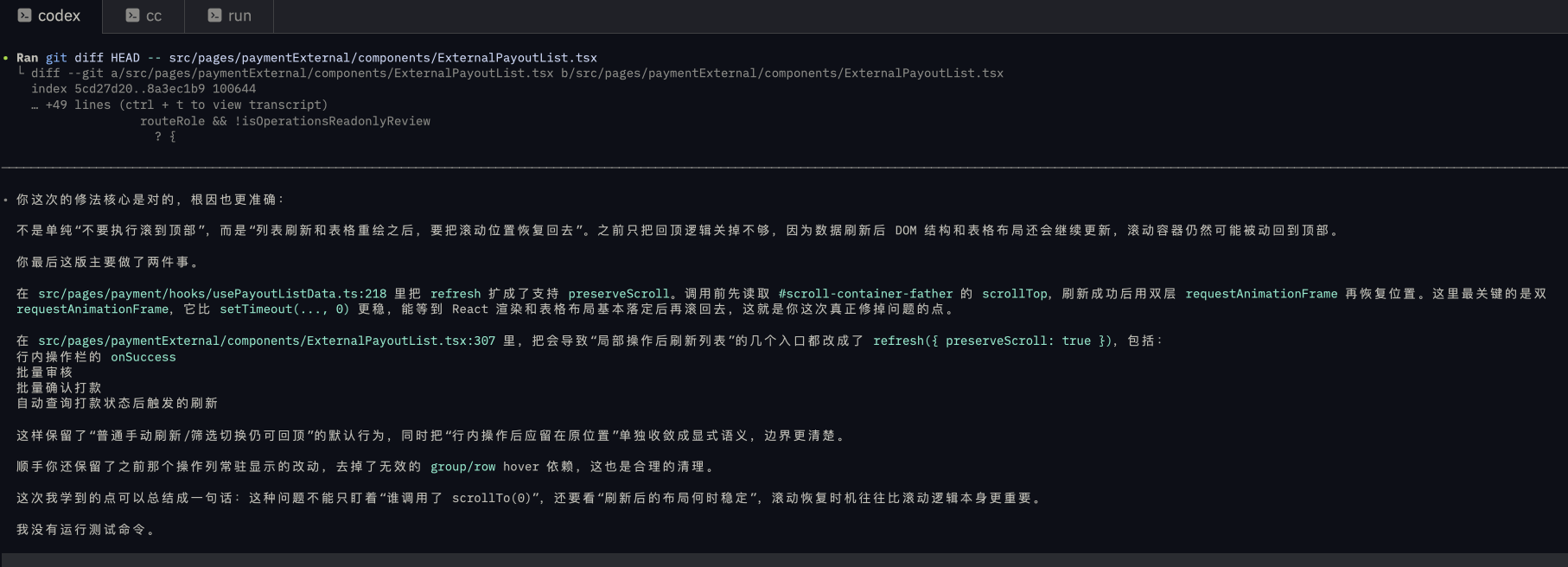

After the tests, I used Kimi’s K2.6-code-preview model on real projects for two days, and I am now even more convinced that it is powerful. Here is a problem that Codex failed to solve in two attempts but Kimi fixed on the first try:

Problem: fix the auto-scroll to the top that happens after a click is confirmed in a scrollable area.

Both failed attempts used Codex 5.4. I still consider Codex one of the strongest models for debugging, but it lost to Kimi here, and in the end I had Codex learn from Kimi’s approach. Kimi is a serious contender among Chinese AI models.

Summary

Through testing and real use, I believe Kimi’s K2.6-code-preview is an under-the-radar dark horse. If you are still deciding which large model to subscribe to, I recommend Kimi’s starter plan. It costs 49 yuan per month, and under normal use you probably will not use up the quota.

Integrating MiniMax, Kimi, and GLM with Claude Code

```bash
# MiniMax
export ANTHROPIC_AUTH_TOKEN=sk-xxx
export ANTHROPIC_BASE_URL=https://api.minimax.io/anthropic
export ANTHROPIC_DEFAULT_OPUS_MODEL=MiniMax-M2.7
export ANTHROPIC_SMALL_FAST_MODEL=MiniMax-M2.7
export ANTHROPIC_DEFAULT_SONNET_MODEL=MiniMax-M2.7
export ANTHROPIC_DEFAULT_HAIKU_MODEL=MiniMax-M2.7
export API_TIMEOUT_MS=3000000
export CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC=1

# Kimi
export ANTHROPIC_BASE_URL=https://api.kimi.com/coding/
export ANTHROPIC_API_KEY=sk-xxx
export ANTHROPIC_DEFAULT_OPUS_MODEL=K2.6-code-preview
export ANTHROPIC_DEFAULT_SONNET_MODEL=K2.6-code-preview
export ANTHROPIC_DEFAULT_HAIKU_MODEL=K2.6-code-preview
export API_TIMEOUT_MS=3000000
export CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC=1

# Zhipu GLM
export ANTHROPIC_AUTH_TOKEN=xxx
export ANTHROPIC_BASE_URL=https://open.bigmodel.cn/api/anthropic
export ANTHROPIC_DEFAULT_OPUS_MODEL=glm-5.1
export ANTHROPIC_DEFAULT_SONNET_MODEL=glm-5.1
export ANTHROPIC_DEFAULT_HAIKU_MODEL=glm-5.1
export API_TIMEOUT_MS=3000000
export CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC=1
```

Since my company also provides an official Claude subscription, I do not set these globally. Instead, I paste the snippet for the provider I want into a terminal window, so it only applies to that window, and I keep the snippets in a clipboard tool.

You may see a warning in the terminal, but it is fine; keep using Claude Code as normal.
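If you switch providers often, a small shell function is one alternative to pasting snippets by hand. The sketch below is a hypothetical helper of my own and not a Claude Code feature. The function name `use_claude_backend` and the key variables `MINIMAX_KEY`, `KIMI_KEY`, and `ZHIPU_KEY` are assumed names.

The function sets the same variables as the snippets above, but only for the current shell. It first clears whatever a previous provider left behind. Running `use_claude_backend claude` unsets everything so that the window falls back to the official subscription.

```bash
# Hypothetical helper (not part of Claude Code): switch the backend for the
# current shell session only. Source it from ~/.zshrc or ~/.bashrc.
# MINIMAX_KEY, KIMI_KEY and ZHIPU_KEY are assumed names for your own keys.
use_claude_backend() {
  # Clear settings from a previous provider so they cannot leak into this one.
  unset ANTHROPIC_AUTH_TOKEN ANTHROPIC_API_KEY ANTHROPIC_BASE_URL \
    ANTHROPIC_DEFAULT_OPUS_MODEL ANTHROPIC_DEFAULT_SONNET_MODEL \
    ANTHROPIC_DEFAULT_HAIKU_MODEL ANTHROPIC_SMALL_FAST_MODEL \
    API_TIMEOUT_MS CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC

  local key base model
  case "$1" in
    minimax)
      key="${MINIMAX_KEY:-}"
      base="https://api.minimax.io/anthropic"
      model="MiniMax-M2.7"
      ;;
    kimi)
      key="${KIMI_KEY:-}"
      base="https://api.kimi.com/coding/"
      model="K2.6-code-preview"
      ;;
    glm)
      key="${ZHIPU_KEY:-}"
      base="https://open.bigmodel.cn/api/anthropic"
      model="glm-5.1"
      ;;
    claude)
      # Official subscription: leave everything unset.
      echo "Claude Code backend: official Claude"
      return 0
      ;;
    *)
      echo "usage: use_claude_backend minimax|kimi|glm|claude" >&2
      return 1
      ;;
  esac

  if [ -z "$key" ]; then
    echo "no API key set for $1" >&2
    return 1
  fi

  # The Kimi snippet uses ANTHROPIC_API_KEY; MiniMax and GLM use ANTHROPIC_AUTH_TOKEN.
  if [ "$1" = "kimi" ]; then
    export ANTHROPIC_API_KEY="$key"
  else
    export ANTHROPIC_AUTH_TOKEN="$key"
  fi
  # Only the MiniMax snippet also sets the small/fast model.
  if [ "$1" = "minimax" ]; then
    export ANTHROPIC_SMALL_FAST_MODEL="$model"
  fi

  export ANTHROPIC_BASE_URL="$base"
  export ANTHROPIC_DEFAULT_OPUS_MODEL="$model"
  export ANTHROPIC_DEFAULT_SONNET_MODEL="$model"
  export ANTHROPIC_DEFAULT_HAIKU_MODEL="$model"
  export API_TIMEOUT_MS=3000000
  export CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC=1
  echo "Claude Code backend: $1 ($model)"
}
```

For example, run `use_claude_backend kimi` in one terminal window and start `claude` there, and other windows are unaffected. Clearing all variables first matters because the Kimi snippet uses `ANTHROPIC_API_KEY` while the others use `ANTHROPIC_AUTH_TOKEN`, and leftovers from one provider could otherwise mix with another.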

Thanks for reading! Hope this article helps you choose your AI service.

(This article is 100% handcrafted, no AI involved.)